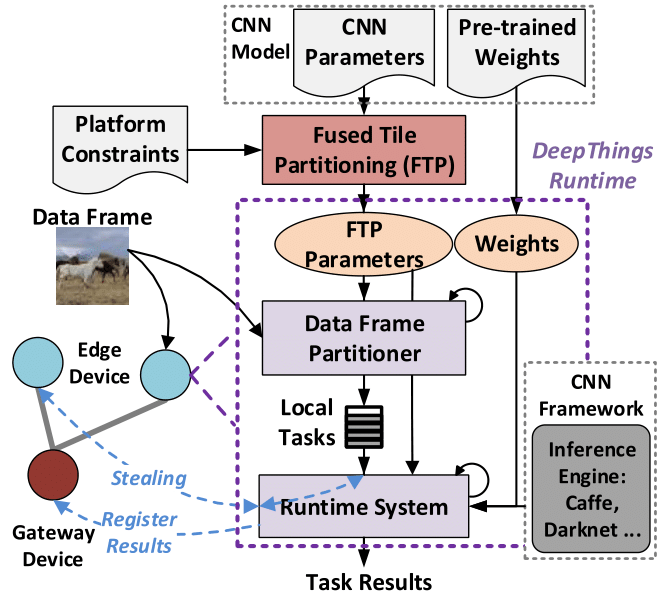

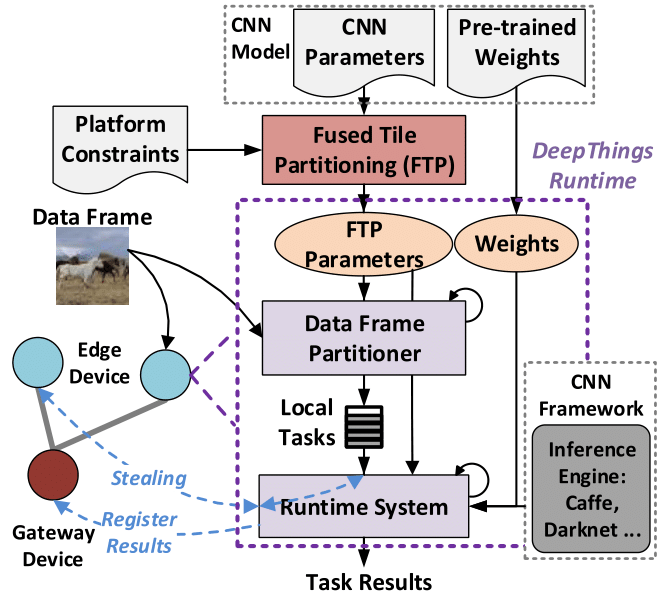

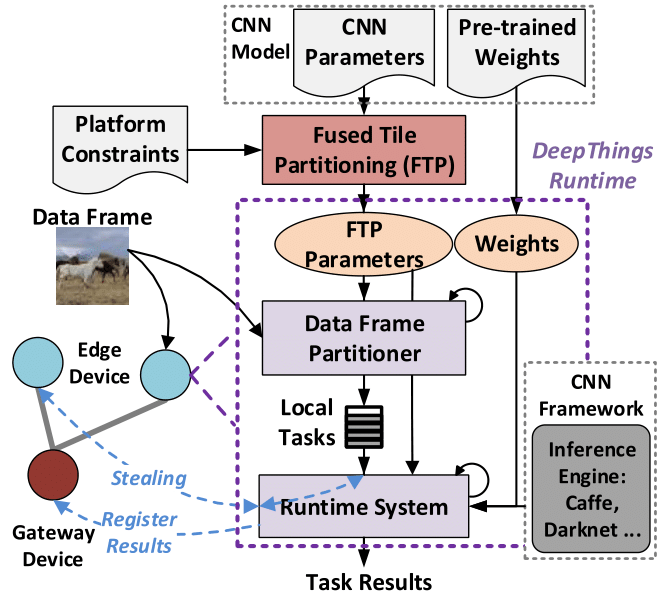

Overview of the DeepThings framework.

This repository includes a lightweight, self-contained and portable C implementation of DeepThings. It uses a [NNPACK](https://github.com/digitalbrain79/NNPACK-darknet)-accelerated [Darknet](https://github.com/zoranzhao/darknet-nnpack) as the default inference engine. More information on porting DeepThings to other inference frameworks and platforms can be found below.

## Platforms

The current implementation has been tested on [Raspberry Pi 3 Model B](https://www.raspberrypi.org/products/raspberry-pi-3-model-b/) running [Raspbian](https://www.raspberrypi.org/downloads/raspbian/).

## Building

Edit the configuration file [include/configure.h](https://github.com/zoranzhao/DeepThings/blob/master/include/configure.h) according to your IoT cluster parameters, then run:

```bash
make clean_all
make
```

This automatically compiles all related libraries and generates the DeepThings executable. If you want to run DeepThings on a Raspberry Pi with NNPACK acceleration, you must first install [NNPACK](https://github.com/zoranzhao/darknet-nnpack/blob/2f2da6bd46b9bbfcd283e0556072f18581392f08/README.md) by following its instructions before running the `make` commands, and set the following options in the Makefile:

```makefile
NNPACK=1
ARM_NEON=1
```

## Downloading pre-trained CNN models and input data

In order to perform distributed inference, you need to download pre-trained CNN models and put them in the [./models](https://github.com/zoranzhao/DeepThings/tree/master/models) folder.

The current implementation has been tested with YOLOv2. Download the [YOLOv2 model](https://github.com/zoranzhao/DeepThings/blob/master/models/yolo.cfg) and the [YOLOv2 weights](https://pjreddie.com/media/files/yolo.weights). If the weights link doesn't work, you can also find the weights [here](https://utexas.box.com/s/ax7f0j0qwnc4yb9ghjprjd93qwk3t4uw).

For input data, images must be numbered starting from 0, named `<#>.jpg` (e.g., `0.jpg`, `1.jpg`), and placed in the [./data/input](https://github.com/zoranzhao/DeepThings/tree/master/data/input) folder.

## Running in an IoT cluster

An overview of the DeepThings command-line options is given below:

```bash

#./deepthings -mode