# Face Mask Detection Based on YOLOv3

**Repository Path**: luokai-dandan/mask-detection-based-on-yolov3

## Basic Information

- **Project Name**: Face Mask Detection Based on YOLOv3

- **Description**: A face mask detection project based on YOLOv3

- **Primary Language**: Python

- **License**: GPL-3.0

- **Default Branch**: master

- **Homepage**: None

- **GVP Project**: No

## Statistics

- **Stars**: 0

- **Forks**: 0

- **Created**: 2021-11-15

- **Last Updated**: 2025-09-22

## Categories & Tags

**Categories**: Uncategorized

**Tags**: Python, PyTorch, YOLOv3

## README

This repository represents Ultralytics open-source research into future object detection methods, and incorporates lessons learned and best practices evolved over thousands of hours of training and evolution on anonymized client datasets. **All code and models are under active development, and are subject to modification or deletion without notice.** Use at your own risk.

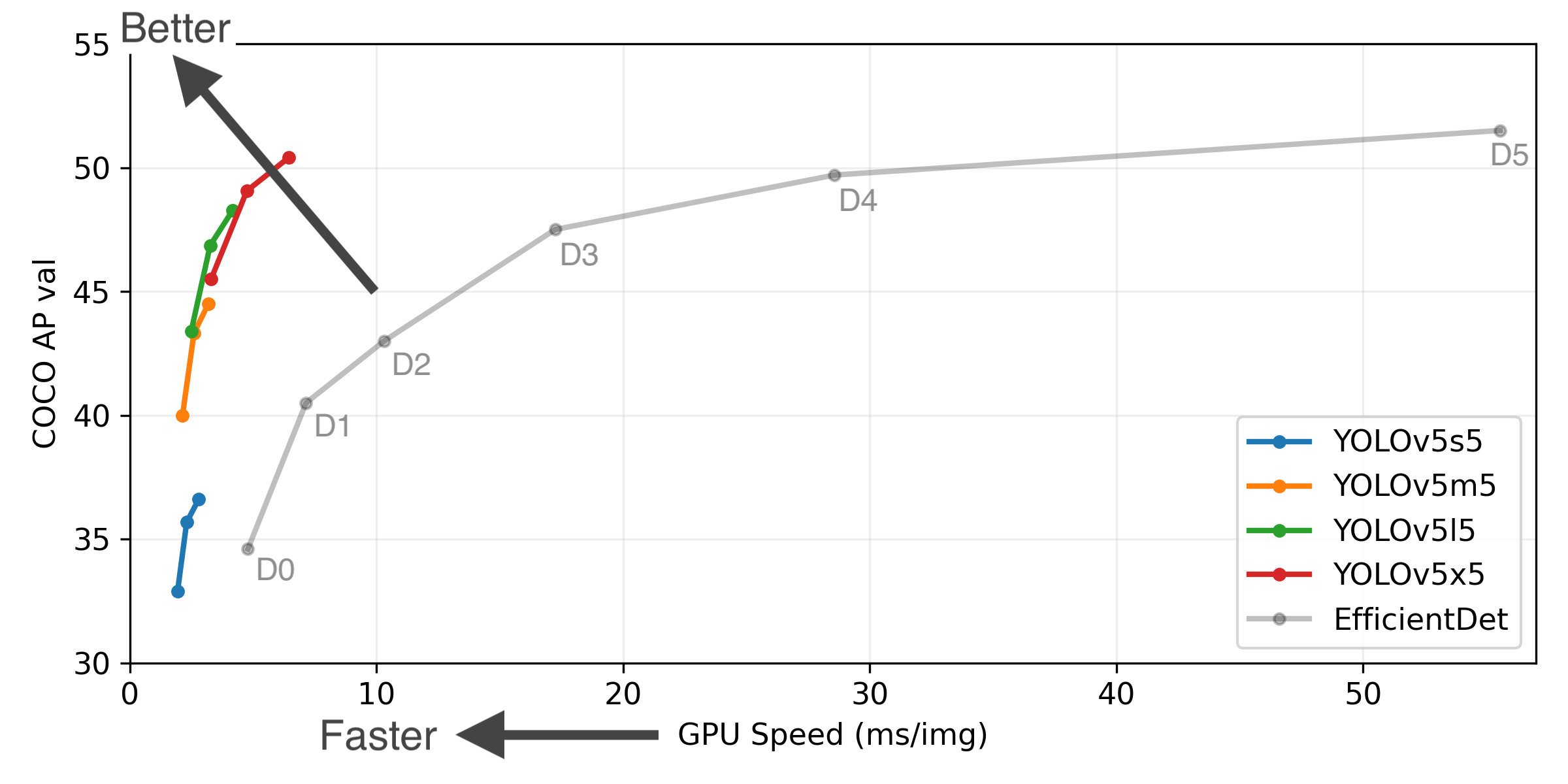

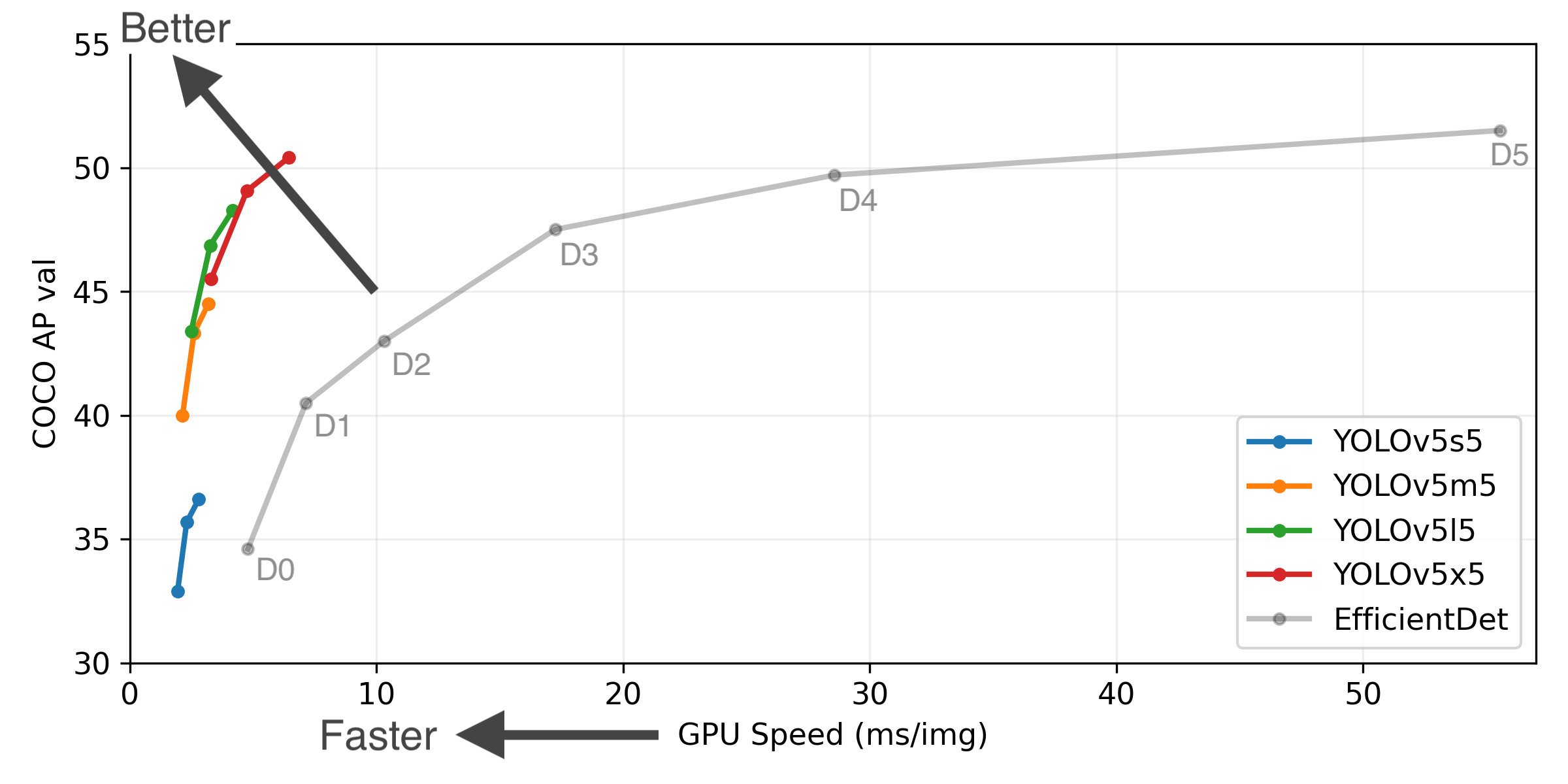

YOLOv5-P5 640 Figure

Figure Notes

* GPU Speed measures end-to-end time per image averaged over 5000 COCO val2017 images using a V100 GPU with batch size 32, and includes image preprocessing, PyTorch FP16 inference, postprocessing and NMS.

* EfficientDet data from [google/automl](https://github.com/google/automl) at batch size 8.

* **Reproduce** by `python test.py --task study --data coco.yaml --iou 0.7 --weights yolov3.pt yolov3-spp.pt yolov3-tiny.pt yolov5l.pt`
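The speed figures in the notes above are per-image averages taken over batched runs. A minimal sketch of that bookkeeping follows. It is not taken from `test.py`: `ms_per_image` is a hypothetical helper, and `run_batch` is a hypothetical stand-in for preprocessing, inference, postprocessing and NMS.

```python
import time
from typing import Callable, Sequence


def ms_per_image(run_batch: Callable[[Sequence], object],
                 images: Sequence,
                 batch_size: int = 32) -> float:
    """Average end-to-end milliseconds per image over all batches."""
    if not images:
        raise ValueError("images must not be empty")
    total_seconds = 0.0
    for start in range(0, len(images), batch_size):
        batch = images[start:start + batch_size]
        t0 = time.perf_counter()
        run_batch(batch)  # hypothetical: preprocess + inference + NMS
        total_seconds += time.perf_counter() - t0
    # Divide by the number of images, not the number of batches.
    return 1000.0 * total_seconds / len(images)
```

When timing real GPU work, the callable would also need to wait for the device to finish (for example with `torch.cuda.synchronize()`), since CUDA kernels run asynchronously.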

## Branch Notice

The [ultralytics/yolov3](https://github.com/ultralytics/yolov3) repository is now divided into two branches:

* [Master branch](https://github.com/ultralytics/yolov3/tree/master): Forward-compatible with all [YOLOv5](https://github.com/ultralytics/yolov5) models and methods (**recommended** ✅).

```bash

$ git clone https://github.com/ultralytics/yolov3 # master branch (default)

```

* [Archive branch](https://github.com/ultralytics/yolov3/tree/archive): Backwards-compatible with original [darknet](https://pjreddie.com/darknet/) *.cfg models (**no longer maintained** ⚠️).

```bash

$ git clone https://github.com/ultralytics/yolov3 -b archive # archive branch

```

## Pretrained Checkpoints

[assets3]: https://github.com/ultralytics/yolov3/releases

[assets5]: https://github.com/ultralytics/yolov5/releases

| Model | size<br>(pixels) | mAP<sup>val</sup><br>0.5:0.95 | mAP<sup>test</sup><br>0.5:0.95 | mAP<sup>val</sup><br>0.5 | Speed<br>V100 (ms) | params<br>(M) | FLOPS<br>640 (B) |
|--- |--- |--- |--- |--- |--- |--- |--- |
| [YOLOv3-tiny][assets3] | 640 | 17.6 | 17.6 | 34.8 | **1.2** | 8.8 | 13.2 |
| [YOLOv3][assets3] | 640 | 43.3 | 43.3 | 63.0 | 4.1 | 61.9 | 156.3 |
| [YOLOv3-SPP][assets3] | 640 | 44.3 | 44.3 | 64.6 | 4.1 | 63.0 | 157.1 |
| [YOLOv5l][assets5] | 640 | **48.2** | **48.2** | **66.9** | 3.7 | 47.0 | 115.4 |

Table Notes

* AP<sup>test</sup> denotes COCO [test-dev2017](http://cocodataset.org/#upload) server results, all other AP results denote val2017 accuracy.

* AP values are for single-model single-scale unless otherwise noted. **Reproduce mAP** by `python test.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65`

* Speed<sub>GPU</sub> averaged over 5000 COCO val2017 images using a GCP [n1-standard-16](https://cloud.google.com/compute/docs/machine-types#n1_standard_machine_types) V100 instance, and includes FP16 inference, postprocessing and NMS. **Reproduce speed** by `python test.py --data coco.yaml --img 640 --conf 0.25 --iou 0.45`

* All checkpoints are trained to 300 epochs with default settings and hyperparameters (no autoaugmentation).
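The `--conf` and `--iou` flags in the reproduce commands above set a confidence threshold and an NMS IoU threshold. As a rough illustration of what such thresholds do, here is a plain-Python greedy NMS sketch. It is not the repository's implementation: the `(x1, y1, x2, y2, score)` box format is an assumption, and the sketch ignores class labels.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(detections, conf_thres=0.25, iou_thres=0.45):
    """Greedy NMS over (x1, y1, x2, y2, score) tuples."""
    # Drop low-confidence boxes, then visit the rest from highest score down.
    candidates = sorted((d for d in detections if d[4] >= conf_thres),
                        key=lambda d: d[4], reverse=True)
    kept = []
    for det in candidates:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(det[:4], k[:4]) <= iou_thres for k in kept):
            kept.append(det)
    return kept
```

A low `conf_thres` such as 0.001 lets many more candidates through to the overlap check, which is why the mAP and speed commands use different values.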

## Requirements

Python 3.8 or later with all [requirements.txt](https://github.com/ultralytics/yolov3/blob/master/requirements.txt) dependencies installed, including `torch>=1.7`. To install run:

```bash

$ pip install -r requirements.txt

```
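To confirm that an environment meets the minimums stated above, a small check along these lines may help. This is a sketch only: `meets_minimum` and `installed_torch_ok` are hypothetical helpers, and the parsing assumes version strings shaped like `1.7.0` or `1.7.0+cu110`.

```python
import re
import sys


def meets_minimum(version: str, minimum: tuple) -> bool:
    """Compare the leading numeric fields of a version string to a minimum."""
    # Strip local build suffixes such as "+cu110", then read the numeric fields.
    core = version.split("+", 1)[0]
    parts = tuple(int(n) for n in re.findall(r"\d+", core)[:len(minimum)])
    # Pad short versions ("2" -> (2, 0)) so the tuple comparison is well defined.
    parts += (0,) * (len(minimum) - len(parts))
    return parts >= minimum


def installed_torch_ok(minimum=(1, 7)):
    """Return True/False for an installed torch, or None if torch is absent."""
    from importlib import metadata  # standard library from Python 3.8
    try:
        return meets_minimum(metadata.version("torch"), minimum)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    print("Python >= 3.8:", sys.version_info[:2] >= (3, 8))
    print("torch >= 1.7:", installed_torch_ok())
```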

## Tutorials

* [Train Custom Data](https://github.com/ultralytics/yolov3/wiki/Train-Custom-Data) 🚀 RECOMMENDED

* [Tips for Best Training Results](https://github.com/ultralytics/yolov5/wiki/Tips-for-Best-Training-Results) ☘️ RECOMMENDED

* [Weights & Biases Logging](https://github.com/ultralytics/yolov5/issues/1289) 🌟 NEW

* [Supervisely Ecosystem](https://github.com/ultralytics/yolov5/issues/2518) 🌟 NEW

* [Multi-GPU Training](https://github.com/ultralytics/yolov5/issues/475)

* [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) ⭐ NEW

* [TorchScript, ONNX, CoreML Export](https://github.com/ultralytics/yolov5/issues/251) 🚀

* [Test-Time Augmentation (TTA)](https://github.com/ultralytics/yolov5/issues/303)

* [Model Ensembling](https://github.com/ultralytics/yolov5/issues/318)

* [Model Pruning/Sparsity](https://github.com/ultralytics/yolov5/issues/304)

* [Hyperparameter Evolution](https://github.com/ultralytics/yolov5/issues/607)

* [Transfer Learning with Frozen Layers](https://github.com/ultralytics/yolov5/issues/1314) ⭐ NEW

* [TensorRT Deployment](https://github.com/wang-xinyu/tensorrtx)

## Environments

YOLOv3 may be run in any of the following up-to-date verified environments (with all dependencies including [CUDA](https://developer.nvidia.com/cuda)/[CUDNN](https://developer.nvidia.com/cudnn), [Python](https://www.python.org/) and [PyTorch](https://pytorch.org/) preinstalled):

- **Google Colab and Kaggle** notebooks with free GPU

- **Google Cloud** Deep Learning VM. See [GCP Quickstart Guide](https://github.com/ultralytics/yolov3/wiki/GCP-Quickstart)

- **Amazon** Deep Learning AMI. See [AWS Quickstart Guide](https://github.com/ultralytics/yolov3/wiki/AWS-Quickstart)

- **Docker Image**. See [Docker Quickstart Guide](https://github.com/ultralytics/yolov3/wiki/Docker-Quickstart)

## Inference

`detect.py` runs inference on a variety of sources, downloading models automatically from the [latest YOLOv3 release](https://github.com/ultralytics/yolov3/releases) and saving results to `runs/detect`.

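```bash
$ python detect.py --source 0                               # webcam
$ python detect.py --source file.jpg                        # image
$ python detect.py --source file.mp4                        # video
$ python detect.py --source path/                           # directory
$ python detect.py --source 'path/*.jpg'                    # glob (quoted so the shell does not expand it)
$ python detect.py --source 'https://youtu.be/NUsoVlDFqZg'  # YouTube video
$ python detect.py --source 'rtsp://example.com/media.mp4'  # RTSP, RTMP, HTTP stream
```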

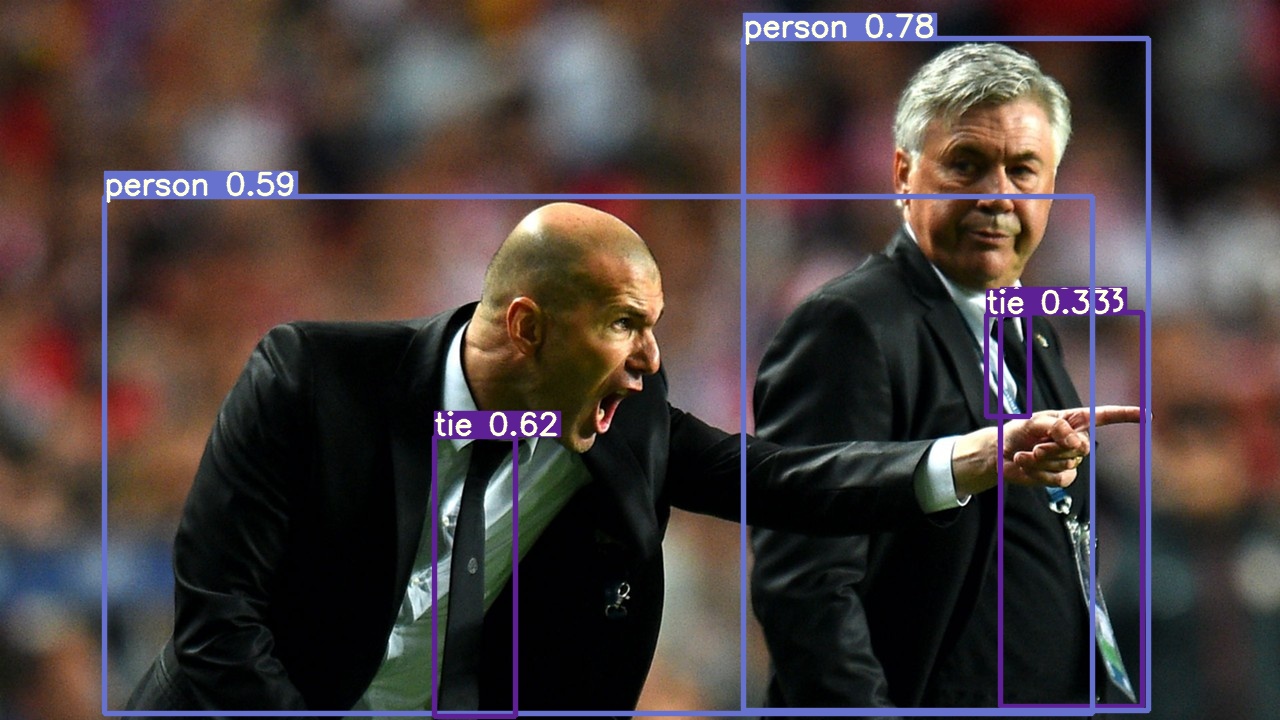

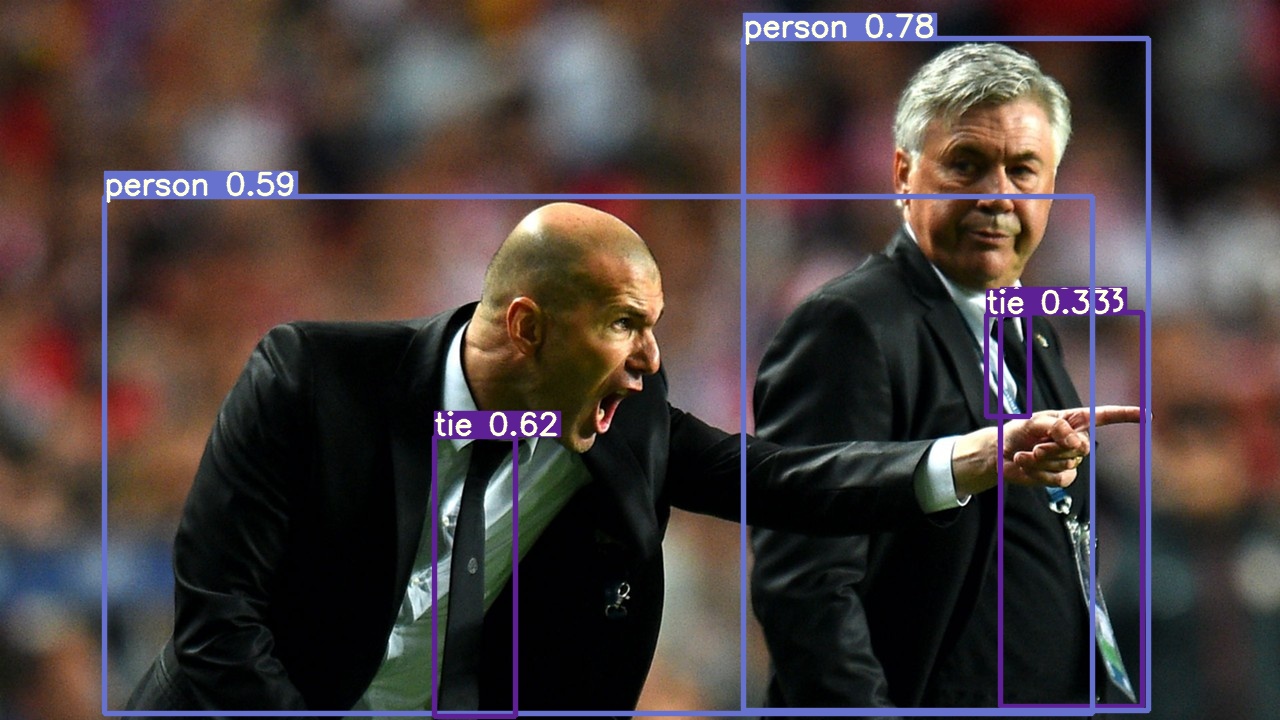

To run inference on example images in `data/images`:

```bash

$ python detect.py --source data/images --weights yolov3.pt --conf 0.25

```
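The source types listed earlier in this section can be told apart from the `--source` string alone. The sketch below is a hypothetical helper written for illustration, not the logic `detect.py` uses; the suffix sets and the fallback to "directory" are assumptions.

```python
from pathlib import Path

# Illustrative suffix sets; the formats detect.py actually accepts may differ.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}
VIDEO_SUFFIXES = {".mp4", ".mov", ".avi", ".mkv"}
URL_PREFIXES = ("rtsp://", "rtmp://", "http://", "https://")


def describe_source(source: str) -> str:
    """Label a --source string with one of the kinds listed in this section."""
    if source.isdigit():
        return "webcam"  # a bare integer is a camera index
    if source.lower().startswith(URL_PREFIXES):
        return "stream"  # includes YouTube and RTSP/RTMP/HTTP URLs
    if "*" in source:
        return "glob"
    suffix = Path(source).suffix.lower()
    if suffix in IMAGE_SUFFIXES:
        return "image"
    if suffix in VIDEO_SUFFIXES:
        return "video"
    return "directory"  # assumed fallback for anything else
```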

### PyTorch Hub

To run **batched inference** with YOLOv3 and [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36):

```python

import torch

# Model

model = torch.hub.load('ultralytics/yolov3', 'yolov3') # or 'yolov3_spp', 'yolov3_tiny'

# Image

img = 'https://ultralytics.com/images/zidane.jpg'

# Inference

results = model(img)

results.print() # or .show(), .save()

```
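The example above passes a single image. As a sketch of what batching could look like, the helpers below group image sources into fixed-size lists and hand each list to the model in one call. This assumes the hub model accepts a list of sources, which this README does not show explicitly; `batched` and `run_batched` are hypothetical names.

```python
from typing import Callable, Iterator, List, Sequence


def batched(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive lists of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def run_batched(model: Callable[[List[str]], object],
                sources: Sequence[str],
                batch_size: int = 8) -> list:
    """Call the model once per batch and collect the returned results."""
    return [model(batch) for batch in batched(sources, batch_size)]
```

With a model loaded as shown earlier, `run_batched(model, sources)` would issue one call per batch and return one results object per call, where `sources` is your own list of image paths or URLs.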

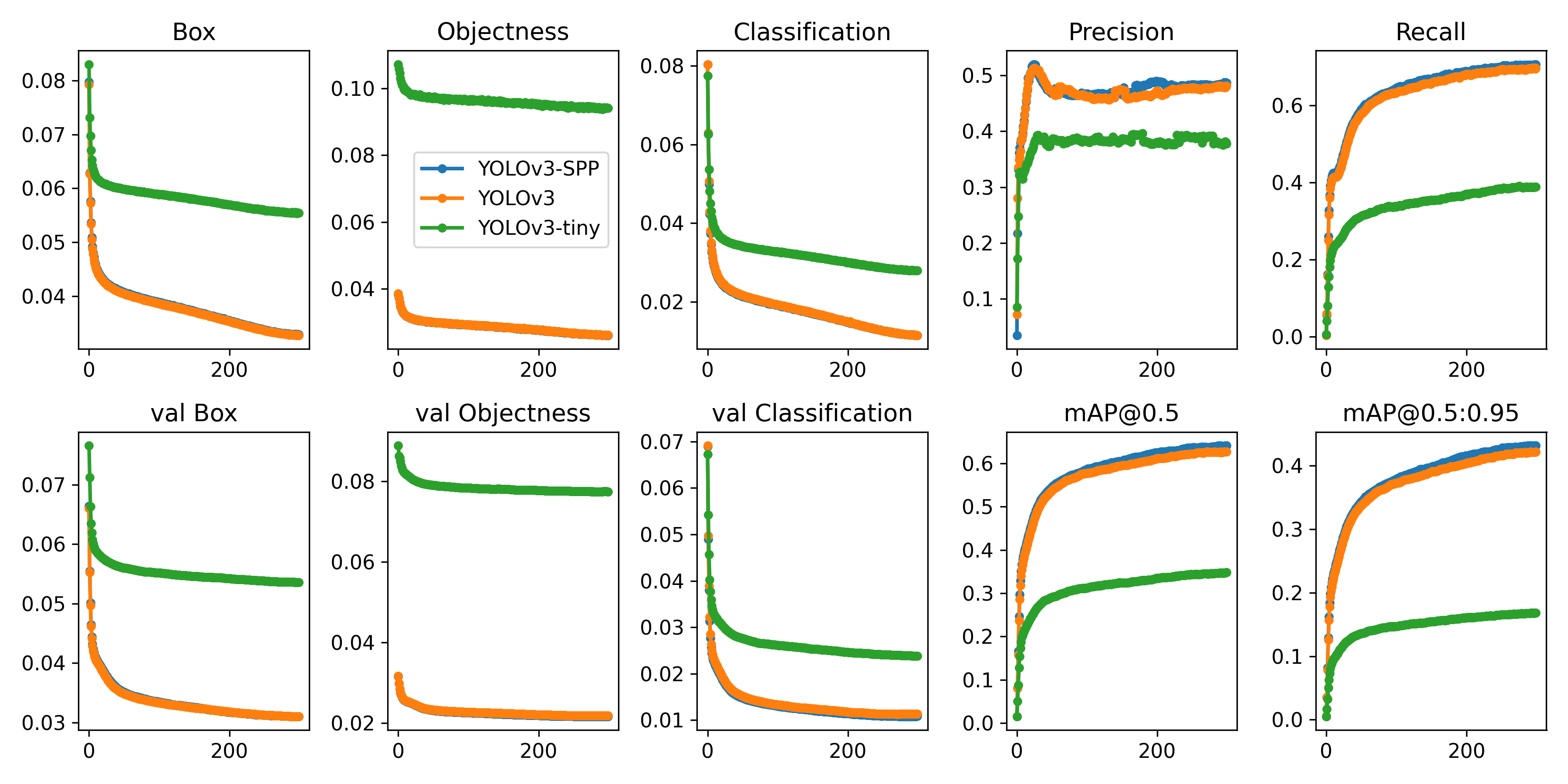

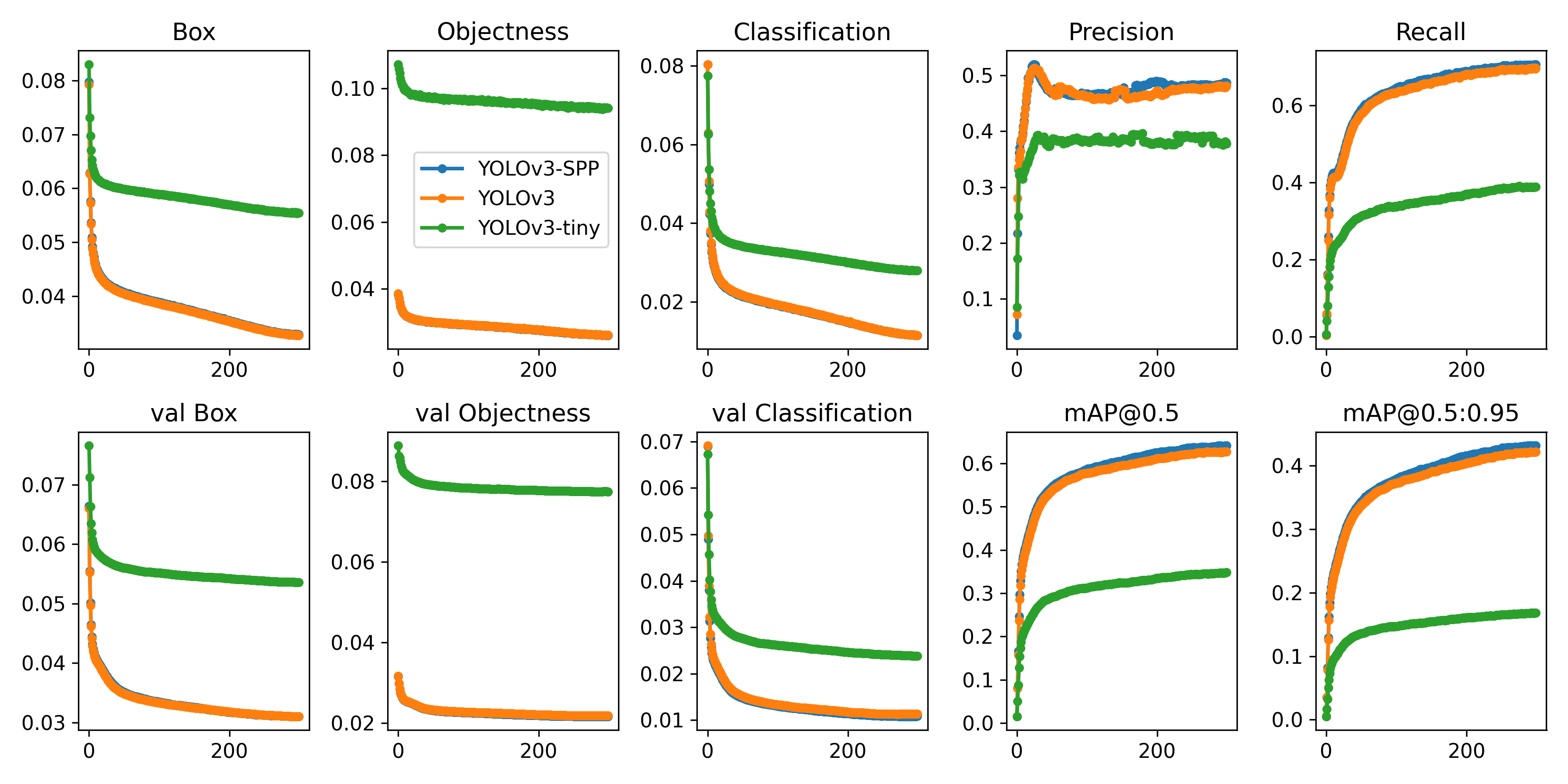

## Training

Run the commands below to reproduce results on the [COCO](https://github.com/ultralytics/yolov3/blob/master/data/scripts/get_coco.sh) dataset (the dataset auto-downloads on first use). Training times for YOLOv3/YOLOv3-SPP/YOLOv3-tiny are 6/6/2 days on a single V100 (multi-GPU training is faster). Use the largest `--batch-size` your GPU allows (the batch sizes shown are for 16 GB devices).

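```bash
$ python train.py --data coco.yaml --cfg yolov3.yaml --weights '' --batch-size 24
$ python train.py --data coco.yaml --cfg yolov3-spp.yaml --weights '' --batch-size 24
$ python train.py --data coco.yaml --cfg yolov3-tiny.yaml --weights '' --batch-size 64
```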

## Citation

[Zenodo DOI](https://zenodo.org/badge/latestdoi/146165888)

## About Us

Ultralytics is a U.S.-based particle physics and AI startup with over 6 years of expertise supporting government, academic and business clients. We offer a wide range of vision AI services, spanning from simple expert advice up to delivery of fully customized, end-to-end production solutions, including:

- **Cloud-based AI** systems operating on **hundreds of HD video streams in realtime.**

- **Edge AI** integrated into custom iOS and Android apps for realtime **30 FPS video inference.**

- **Custom data training**, hyperparameter evolution, and model exportation to any destination.

For business inquiries and professional support requests please visit us at https://ultralytics.com.

## Contact

**Issues should be raised directly in the repository.** For business inquiries or professional support requests please visit https://ultralytics.com or email Glenn Jocher at glenn.jocher@ultralytics.com.
