# arbitrary_style_transfer

**Repository Path**: xiajw06/arbitrary_style_transfer

## Basic Information

- **Project Name**: arbitrary_style_transfer

- **Description**: Fast Neural Style Transfer with Arbitrary Style using AdaIN Layer - Based on Huang et al. "Arbitrary Style Transfer in Real-time with Adaptive Instance Normalization"

- **Primary Language**: Unknown

- **License**: MIT

- **Default Branch**: master

- **Homepage**: None

- **GVP Project**: No

## Statistics

- **Stars**: 0

- **Forks**: 0

- **Created**: 2020-01-13

- **Last Updated**: 2020-12-19

## Categories & Tags

**Categories**: Uncategorized

**Tags**: None

## README

# Arbitrary-Style-Transfer

Arbitrary-Style-Per-Model Fast Neural Style Transfer Method

## Description

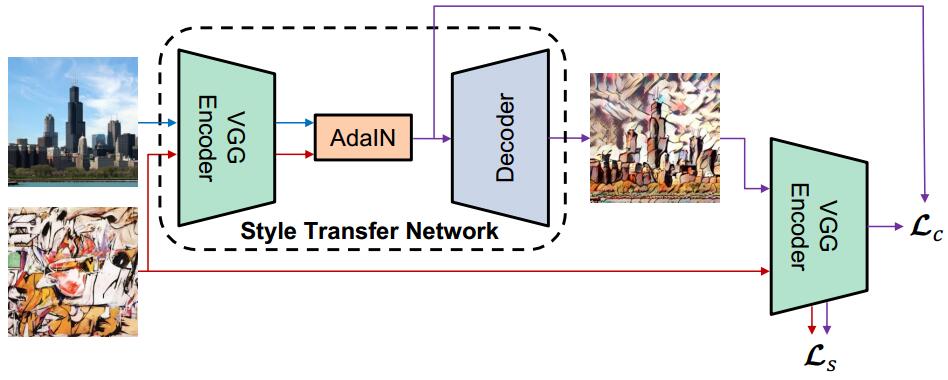

This project uses an Encoder-AdaIN-Decoder architecture, a deep convolutional neural network, as a Style Transfer Network (STN). The STN takes two arbitrary images as input (one as content, the other as style) and outputs a generated image that combines the content and spatial structure of the former with the style (color, texture) of the latter, without re-training the network. The STN is trained on the MS-COCO dataset (about 12.6GB) and the WikiArt dataset (about 36GB).

This code is based on Huang et al. [Arbitrary Style Transfer in Real-time with Adaptive Instance Normalization](https://arxiv.org/pdf/1703.06868.pdf) *(ICCV 2017)*

System overview (the figure above, taken from the original paper by Huang et al.). The encoder is a fixed VGG-19 (up to relu4_1) pre-trained on the ImageNet dataset for image classification. The decoder is trained to invert the AdaIN output from feature space back to image space.
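
As a rough illustration of the AdaIN step in this pipeline, the following NumPy sketch shifts and scales the content features so that their per-channel mean and standard deviation match those of the style features, following the formula in Huang et al. It is not the code from this repository: the function name `adain`, the `(N, H, W, C)` feature layout, and the `eps` value are assumptions made for the example.

```python
import numpy as np

def adain(content_feat, style_feat, eps=1e-5):
    """Adaptive Instance Normalization on feature maps shaped (N, H, W, C).

    The layout and eps are assumptions for this sketch.
    """
    # Per-sample, per-channel statistics over the spatial axes (H, W).
    c_mean = content_feat.mean(axis=(1, 2), keepdims=True)
    c_std = content_feat.std(axis=(1, 2), keepdims=True)
    s_mean = style_feat.mean(axis=(1, 2), keepdims=True)
    s_std = style_feat.std(axis=(1, 2), keepdims=True)
    # Normalize the content features, then re-scale with the style statistics.
    normalized = (content_feat - c_mean) / (c_std + eps)
    return s_std * normalized + s_mean

# Random stand-ins for relu4_1 features; spatial sizes may differ between inputs.
rng = np.random.default_rng(0)
content = rng.normal(size=(1, 32, 32, 512))
style = rng.normal(loc=2.0, scale=3.0, size=(1, 16, 16, 512))
out = adain(content, style)
```

The output keeps the spatial shape of the content features, while its channel-wise statistics follow the style features. The decoder then maps this tensor back to an image.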

## Prerequisites

- [Pre-trained VGG19 normalised network](https://s3.amazonaws.com/xunhuang-public/adain/vgg_normalised.t7) (MD5 `c637adfa9cee4b33b59c5a754883ba82`)

I have provided a converter in the `tool` folder. It extracts the kernels and biases from the Torch model file (.t7 format) and saves them to an npz file, which is easier to process with NumPy.

Or you can simply download my pre-processed file:

[Pre-trained VGG19 normalised network npz format](https://s3-us-west-2.amazonaws.com/wengaoye/vgg19_normalised.npz) (MD5 `c5c961738b134ffe206e0a552c728aea`)

- [Microsoft COCO dataset](http://msvocds.blob.core.windows.net/coco2014/train2014.zip)

- [WikiArt dataset](https://www.kaggle.com/c/painter-by-numbers)
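
To confirm that a downloaded weights file matches the MD5 values listed above, a small check along these lines may help. This is a minimal sketch using only the Python standard library; the helper names `md5_of_file` and `verify` are hypothetical, and the local file names are assumed to match the names in the download links.

```python
import hashlib
import os

def md5_of_file(path, chunk_size=1 << 20):
    """Return the hex MD5 digest of a file, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify(path, expected_md5):
    """True if the file's MD5 equals the expected value (case-insensitive)."""
    return md5_of_file(path) == expected_md5.lower()

# MD5 values copied from the list above; file names are assumed from the URLs.
EXPECTED_MD5 = {
    "vgg_normalised.t7": "c637adfa9cee4b33b59c5a754883ba82",
    "vgg19_normalised.npz": "c5c961738b134ffe206e0a552c728aea",
}

if __name__ == "__main__":
    for name, expected in EXPECTED_MD5.items():
        if os.path.exists(name):
            status = "OK" if verify(name, expected) else "MISMATCH"
            print(name, status)
```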

## Trained Model

You can download my trained model from [here](https://s3-us-west-2.amazonaws.com/wengaoye/arbitrary_style_model_style-weight-2e0.zip). It was trained with a style weight of 2.0.

Or you can directly use `download_trained_model.sh` in the repo.
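
In the training objective of Huang et al., the style weight balances two terms: the total loss is the content loss plus the style weight times the style loss. The sketch below shows one way to compute this with NumPy. The function names, the use of mean squared differences, and the list-of-feature-maps inputs are assumptions for illustration, and the repository's own implementation may differ.

```python
import numpy as np

def content_loss(output_feat, adain_target):
    # Distance between the encoder features of the generated image
    # and the AdaIN output that the decoder was asked to invert.
    return float(np.mean((output_feat - adain_target) ** 2))

def style_loss(output_feats, style_feats):
    # Match per-channel mean and standard deviation at each chosen layer.
    # Each element is a feature map shaped (N, H, W, C) (assumed layout).
    total = 0.0
    for out, sty in zip(output_feats, style_feats):
        mean_diff = out.mean(axis=(1, 2)) - sty.mean(axis=(1, 2))
        std_diff = out.std(axis=(1, 2)) - sty.std(axis=(1, 2))
        total += float(np.mean(mean_diff ** 2) + np.mean(std_diff ** 2))
    return total

def total_loss(output_feat, adain_target, output_feats, style_feats,
               style_weight=2.0):
    # style_weight=2.0 mirrors the value used for the trained model above.
    return (content_loss(output_feat, adain_target)
            + style_weight * style_loss(output_feats, style_feats))
```

A larger style weight pushes the decoder toward matching the style statistics more closely, at the cost of content fidelity.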

## Manual

- The main file `main.py` is a demo that contains both the training procedure and the inferring procedure (inferring means generating stylized images).

You can switch between these two procedures by changing the flag `IS_TRAINING`.

- By default,

(1) The content images lie in the folder `"./images/content/"`

(2) The style images lie in the folder `"./images/style/"`

(3) The weights file of the pre-trained VGG-19 lies in the current working directory. (See `Prerequisites` above. By the way, `download_vgg19.sh` already takes care of this.)

(4) The MS-COCO images dataset for training lies in the folder `"../MS_COCO/"` (See `Prerequisites` above)

(5) The WikiArt images dataset for training lies in the folder `"../WikiArt/"` (See `Prerequisites` above)

(6) The checkpoint files of trained models lie in the folder `"./models/"` (You should create this folder manually before training.)

(7) After the inferring procedure, the stylized images are written to the folder `"./outputs/"`

- For training, you should make sure (3), (4), (5) and (6) are prepared correctly.

- For inferring, you should make sure (1), (2), (3) and (6) are prepared correctly.

- You can, of course, organize the files and folders however you like; just modify the related parameters in the `main.py` file.
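
Before running `main.py`, the default layout listed above can be checked with a short script such as the one below. This is only a sketch: the helper `missing_paths` is hypothetical and not part of the repository, and the weights file name `vgg19_normalised.npz` for item (3) is an assumption taken from the download link in `Prerequisites`.

```python
import os

# Default locations, keyed by the item numbers used in the list above.
DEFAULT_PATHS = {
    1: "./images/content/",
    2: "./images/style/",
    3: "./vgg19_normalised.npz",  # assumed file name for the VGG-19 weights
    4: "../MS_COCO/",
    5: "../WikiArt/",
    6: "./models/",
}

# Items required by each procedure, as stated in the manual.
REQUIRED = {
    "training": (3, 4, 5, 6),
    "inferring": (1, 2, 3, 6),
}

def missing_paths(mode, root="."):
    """Return the default paths required for `mode` that do not exist under `root`."""
    return [
        DEFAULT_PATHS[i]
        for i in REQUIRED[mode]
        if not os.path.exists(os.path.join(root, DEFAULT_PATHS[i]))
    ]

if __name__ == "__main__":
    for mode in REQUIRED:
        print(mode, "missing:", missing_paths(mode))
```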

## Results

[Result gallery: 19 pairs of images; columns: style, output (generated image)]

## My Running Environment

Hardware

- CPU: Intel® Core™ i9-7900X (3.30GHz x 10 cores, 20 threads)

- GPU: NVIDIA® Titan Xp (Architecture: Pascal, Frame buffer: 12GB)

- Memory: 32GB DDR4

Operating System

- Ubuntu 16.04.3 LTS

Software

- Python 3.6.2

- NumPy 1.13.1

- TensorFlow 1.3.0

- SciPy 0.19.1

- CUDA 8.0.61

- cuDNN 6.0.21

## References

- The encoder, implemented with the first few layers (up to relu4_1) of a pre-trained VGG-19, is based on [Anish Athalye's vgg.py](https://github.com/anishathalye/neural-style/blob/master/vgg.py).

## Citation

```
@misc{ye2017arbitrarystyletransfer,
  author = {Wengao Ye},
  title = {Arbitrary Style Transfer},
  year = {2017},
  publisher = {GitHub},
  journal = {GitHub repository},
  howpublished = {\url{https://github.com/elleryqueenhomels/arbitrary_style_transfer}}
}
```