| name | about | labels |
|---|---|---|
| Bug Report | Use this template for reporting a bug | kind/bug |

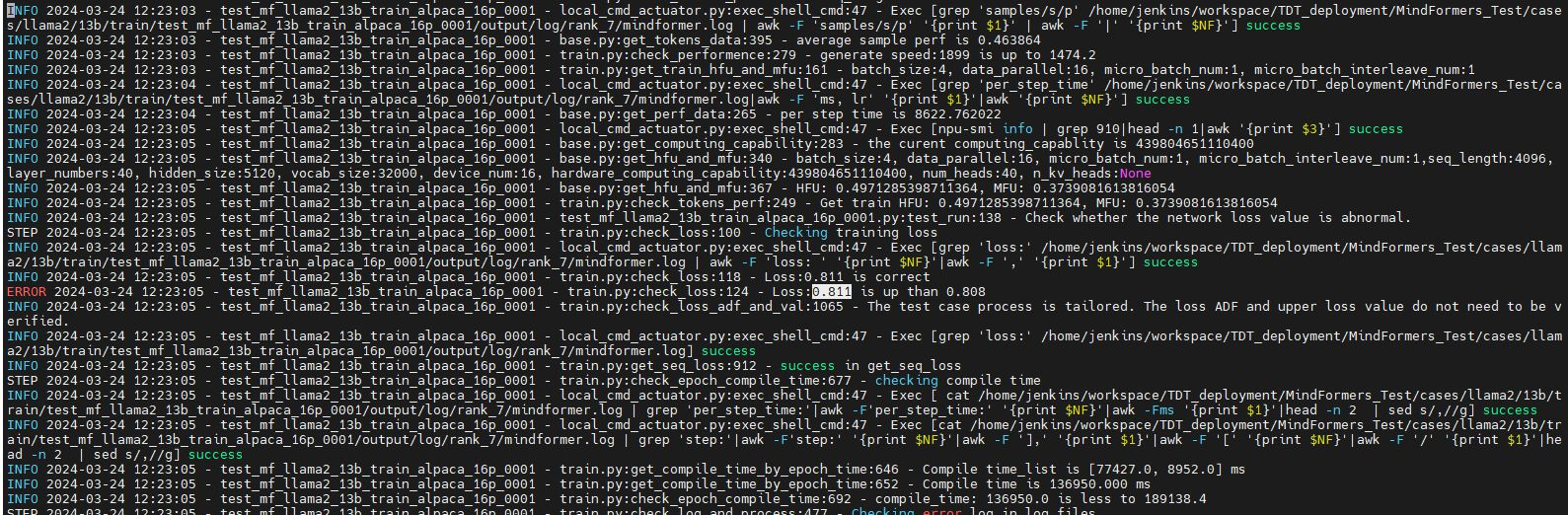

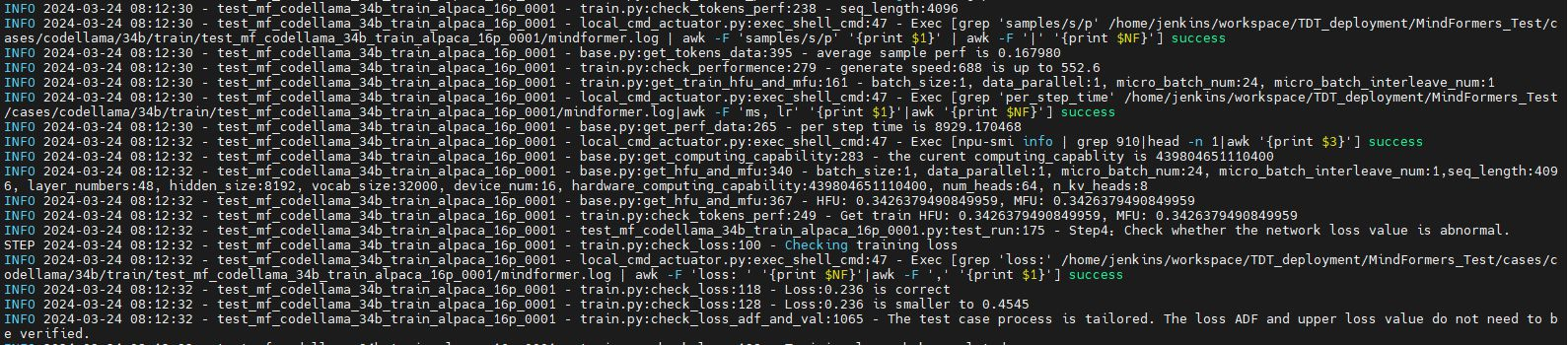

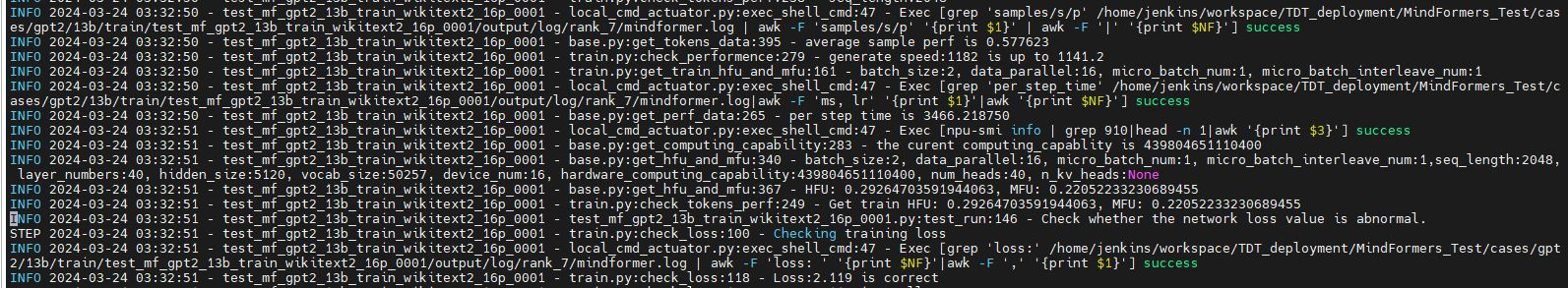

Training of the llama2/gpt2/codellama networks fails in the 910B1 environment

Model repository: https://gitee.com/mindspore/mindformers/blob/dev/docs/model_cards/llama2.md

Hardware environment (Ascend/GPU/CPU): Please delete the backend not involved:

/device ascend/

Failing version

CANN: Milan_C17/20240308

MS:r2.3_20240318021514_647c66237

MF:dev_20240318121523_4a844784a3b

Execution mode (PyNative/Graph): Please delete the mode not involved:

/mode pynative

/mode graph

Test case repository: MindFormers_Test/cases/llama2/13b/train/

Test case:

test_mf_llama2_13b_train_alpaca_16p_0001

bash run_distribute.sh /home/workspace/config/hccl_16p.json ./configs/llama2/run_llama2_13b_910b_finetune.yaml [8,16] finetune 16

Expected result: network training succeeds.

[ERROR] KERNEL(2671083,ffff951de480,python):2024-03-19-11:53:08.037.101 [mindspore/ccsrc/plugin/factory/ms_factory.h:53] Register] Kernel NotEqual is already registered!

[WARNING] GE_ADPT(2671083,ffff951de480,python):2024-03-19-11:53:08.103.617 [mindspore/ccsrc/transform/symbol/acl_tdt_symbol.cc:49] LoadAcltdtApiSymbol] Dlopen /usr/local/Ascend/latest/lib64/libacl_tdt_channel.so failed!libacl_tdt_queue.so: cannot open shared object file: No such file or directory

2024-03-19 11:53:14,103 - mindformers[mindformers/tools/utils.py:153] - INFO - set output path to '/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/output'

[WARNING] DEVICE(2671083,ffff951de480,python):2024-03-19-11:53:14.284.288 [mindspore/ccsrc/plugin/device/ascend/hal/device/ascend_memory_adapter.cc:95] Initialize] Reserved memory size for other components(3187671040) is less than recommend size(4073724928), It may lead to Out Of Memory in HCCL or other components, Please double check context key 'variable_memory_max_size'/'max_device_memory'

2024-03-19 11:53:31,012 - mindformers[mindformers/tools/cloud_adapter/cloud_monitor.py:43] - ERROR - Traceback (most recent call last):

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/core/context/build_context.py", line 116, in init_context

init()

File "/home/miniconda3/envs/ci/lib/python3.7/site-packages/mindspore/communication/management.py", line 188, in init

init_hccl()

RuntimeError: acltdtCreateChannelWithCapacity is null.

----------------------------------------------------

- C++ Call Stack: (For framework developers)

----------------------------------------------------

mindspore/ccsrc/transform/symbol/symbol_utils.h:26 RunAscendApi

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/tools/cloud_adapter/cloud_monitor.py", line 34, in wrapper

result = run_func(*args, **kwargs)

File "run_mindformer.py", line 35, in main

build_context(config)

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/core/context/build_context.py", line 44, in build_context

context_config=config.context, parallel_config=config.parallel)

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/core/context/build_context.py", line 118, in init_context

raise RuntimeError("Notice: if you are trying to run with a single device, please set "

RuntimeError: Notice: if you are trying to run with a single device, please set use_parallel=False. If not, please check the error message above.

Traceback (most recent call last):

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/core/context/build_context.py", line 116, in init_context

init()

File "/home/miniconda3/envs/ci/lib/python3.7/site-packages/mindspore/communication/management.py", line 188, in init

init_hccl()

RuntimeError: acltdtCreateChannelWithCapacity is null.

----------------------------------------------------

- C++ Call Stack: (For framework developers)

----------------------------------------------------

mindspore/ccsrc/transform/symbol/symbol_utils.h:26 RunAscendApi

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "run_mindformer.py", line 267, in <module>

main(config_)

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/tools/cloud_adapter/cloud_monitor.py", line 44, in wrapper

raise exc

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/tools/cloud_adapter/cloud_monitor.py", line 34, in wrapper

result = run_func(*args, **kwargs)

File "run_mindformer.py", line 35, in main

build_context(config)

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/core/context/build_context.py", line 44, in build_context

context_config=config.context, parallel_config=config.parallel)

File "/home/jenkins0/MindFormers_Test/cases/llama2/13b/train/test_mf_llama2_13b_train_alpaca_16p_0001/scripts/mf_parallel15/mindformers/core/context/build_context.py", line 118, in init_context

raise RuntimeError("Notice: if you are trying to run with a single device, please set "

RuntimeError: Notice: if you are trying to run with a single device, please set use_parallel=False. If not, please check the error message above.

Error in atexit._run_exitfuncs:

RuntimeError: The pointer[runtime_instance_] is null.

----------------------------------------------------

- Framework Unexpected Exception Raised:

----------------------------------------------------

This exception is caused by framework's unexpected error. Please create an issue at https://gitee.com/mindspore/mindspore/issues to get help.

----------------------------------------------------

- C++ Call Stack: (For framework developers)

----------------------------------------------------

mindspore/ccsrc/plugin/device/ascend/hal/hardware/ge_device_res_manager.h:82 GetStream

free(): double free detected in tcache 2
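When triaging a log like the one above, it can help to pull out only the framework-level `[ERROR]` and `[WARNING]` lines. The following is a minimal sketch. The regular expression is an assumption inferred from the framework lines in this log, not an official description of the MindSpore log format, and `parse_framework_lines` is a hypothetical helper name.

```python
import re

# Pattern inferred from the framework lines in this issue (an assumption):
# [LEVEL] MODULE(pid,tid,proc):timestamp [file:line] Func] message
LOG_LINE = re.compile(
    r"^\[(?P<level>[A-Z]+)\] (?P<module>\w+)\((?P<pid>\d+),[^)]*\):"
    r"(?P<time>\S+) \[(?P<file>[^\]:]+):(?P<line>\d+)\] "
    r"(?P<func>\w+)\] (?P<message>.*)$"
)


def parse_framework_lines(text):
    """Return one dict per line that matches the inferred framework format."""
    records = []
    for raw in text.splitlines():
        match = LOG_LINE.match(raw.strip())
        if match:
            records.append(match.groupdict())
    return records


# Two framework lines and one Python-level line copied from the log above.
SAMPLE = """\
[ERROR] KERNEL(2671083,ffff951de480,python):2024-03-19-11:53:08.037.101 [mindspore/ccsrc/plugin/factory/ms_factory.h:53] Register] Kernel NotEqual is already registered!
[WARNING] GE_ADPT(2671083,ffff951de480,python):2024-03-19-11:53:08.103.617 [mindspore/ccsrc/transform/symbol/acl_tdt_symbol.cc:49] LoadAcltdtApiSymbol] Dlopen /usr/local/Ascend/latest/lib64/libacl_tdt_channel.so failed!libacl_tdt_queue.so: cannot open shared object file: No such file or directory
RuntimeError: acltdtCreateChannelWithCapacity is null.
"""

if __name__ == "__main__":
    for rec in parse_framework_lines(SAMPLE):
        print(rec["level"], rec["module"], rec["func"], "-", rec["message"])
```

Lines that do not match the pattern, such as the Python traceback, are skipped rather than reported as errors, so the sketch only summarizes the framework messages.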

Assign to 冯浩 (Feng Hao).

Please assign maintainer to check this issue.


@zhangjie18


Thank you for your question. You can comment //mindspore-assistant to get help faster:

Cause: a CANN package environment problem.
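The warning in the log reports that loading `/usr/local/Ascend/latest/lib64/libacl_tdt_channel.so` failed because `libacl_tdt_queue.so` could not be opened, which is consistent with a CANN package environment problem. A minimal sketch for checking this on a machine before launching training is shown below. The directory and library names are copied from that warning; `check_libraries` is a hypothetical helper, and the check only confirms that the files exist and can be loaded, not that the CANN version is compatible.

```python
import ctypes
import os

# Directory and library names copied from the warning in the log;
# adjust LIB_DIR if CANN is installed elsewhere (assumption).
LIB_DIR = "/usr/local/Ascend/latest/lib64"
LIB_NAMES = ["libacl_tdt_queue.so", "libacl_tdt_channel.so"]


def check_libraries(lib_dir, lib_names):
    """Map each library name to None if it loads, else to an error string."""
    results = {}
    for name in lib_names:
        path = os.path.join(lib_dir, name)
        if not os.path.exists(path):
            results[name] = "file not found: " + path
            continue
        try:
            ctypes.CDLL(path)  # attempts the load, resolving dependencies
            results[name] = None
        except OSError as exc:
            results[name] = str(exc)
    return results


if __name__ == "__main__":
    for name, error in check_libraries(LIB_DIR, LIB_NAMES).items():
        print(name, "OK" if error is None else "FAILED: " + error)
```

If either library is reported as missing or fails to load, reinstalling or re-sourcing the CANN package is the likely next step, in line with the conclusion recorded in this issue.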

Duplicate issue; regression is verified with the same version information as the original issue.

https://e.gitee.com/mind_spore/issues/table?issue=I99KSO

Regression version: CANN: Milan_C17/20240321

MS:r2.3.q1_20240322204955_5a94f41a4670cc(2.3.B090)

MF:r1.1.tr5_20240322145526_e61f4a0e72e69

Regression steps: see the steps in this issue

Basic problem: resolved

Test conclusion: regression passed

Regression date: 2024.3.26
